How harmful is AI in healthcare?

How can we regulate healthcare AI to get the best out of it and prevent harm?

This is a newsletter of Faces of Digital Health - a podcast that explores the diversity of healthcare systems and healthcare innovation worldwide. Interviews with policymakers, entrepreneurs, and clinicians provide the listeners with insights into market specifics, go-to-market strategies, barriers to success, characteristics of different healthcare systems, challenges of healthcare systems, and access to healthcare. Find out more on the website, tune in on Spotify or iTunes.

There are (at least) three concerns I have with AI in healthcare:

Over-reliance on AI insights by both clinicians and patients

Added pressure on patients to be informed, “empowered” and self-managing

Patients falling through the cracks due to over-automation, with no human intermediary to intervene when something goes wrong

In this newsletter I will present these concerns through personal examples. I’m complementing the lived experience with expert insights about ethical considerations with AI and current regulatory ideas, which were covered on the Faces of Digital Health podcast. Scroll down based on your interest!

Concern 1: Over-reliance on AI insights by both clinicians and patients

Two years ago, I experienced intense chest pain. After my GP ruled out cardiovascular causes, I was referred to a gastroenterologist, since reflux and other conditions can cause heartburn (a burning sensation in the chest). The wait time for my appointment was two months, and during that period the pain persisted in waves. I was scared, to put it mildly. I turned to Google and ChatGPT to find clarity, and from what I read, gastritis seemed to best match my symptoms.

When I finally saw the specialist and he asked, “What brings you here today?” I blurted out, eager to be efficient and informed, “Gastritis!”

He looked at me with a raised eyebrow and replied, “Says who?”

That question hit me. It was a fair one. It made me realise how shaky my self-diagnosis actually was, despite the fact that I knew AI can hallucinate and you should never take its responses for granted.

I appreciated the doctor’s calm follow-up: that we’d need to run several tests before drawing any conclusions. We never did find a definitive cause. But it wasn’t gastritis, and eventually the pain faded away. Two years later, my skills for searching for answers seemed to have improved, at least in the situation I’m about to describe.

Concern 2: Added pressure on patients to be informed and “empowered”

I’ve lived with inflammatory bowel disease for over 20 years, and right now I’m in the middle of one of the worst flare-ups I’ve ever had. Since the first line of medications didn’t help, we had to significantly change my treatment. When I spoke to my doctor, I knew I would likely need biologics (a class of medical products derived from living organisms or their components that target specific pathways in the immune system or other biological processes), but I didn’t know much more than that.

When I finally got a specialist appointment, it left me stunned. Not because of what the doctor said, but because of what he didn’t say. He didn’t give a lecture. He didn’t tell me what he was going to prescribe. Instead, he looked me in the eye and asked, “So, which medications do you want?”

I was caught off guard.

Treatment protocols have changed significantly over the past two decades, and I’m not a doctor. However, before the appointment, I had done my homework. I’d had a long “conversation” with ChatGPT, feeding it several prompts that described my situation. This is how it went down.

How to prompt for patient research

I asked ChatGPT for guidance through the following prompts, constantly keeping in mind that for valuable responses you need to be as detailed as possible in prompting. This was easy, since who knows the patient’s history, bad experiences and problems better than the patient? These were my prompts:

“You are a gastroenterologist. This is your patient: [input patient details – current problems, current therapies, past therapies]. What escalation steps would you take?”

The result was a detailed list of diagnostic procedures, lab tests, and medication options. I followed up with another prompt:

“Create a timeline of suggested next steps, with expected duration for each potential therapy. Keep in mind the patient previously discontinued [therapy] due to [side effect], and stopped [therapy name] after 8 years of use. Provide instructions for the patient including diet, exercise, and other relevant recommendations. How do you choose between biologic therapies? What background can you give the patient, and what choices can you offer?”

That gave me an overview of available therapies, a comparison table, and loads of useful context. I asked one more thing:

“Based on the discussion above, create patient instructions.”

This turned the details into easy-to-digest lay language.
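The three prompts above can be kept as reusable fill-in templates. What follows is only a minimal sketch in Python, not a description of any tool used here: the names `PatientContext` and `build_prompts`, the field names, and the placeholder values are all hypothetical. It only assembles the prompt text and does not call any AI service, so the prompts still need to be pasted into a chatbot by hand.

```python
from dataclasses import dataclass


@dataclass
class PatientContext:
    """Hypothetical container for the details a patient fills in."""
    details: str               # current problems, current and past therapies
    discontinued_therapy: str  # therapy stopped because of a side effect
    side_effect: str
    long_term_therapy: str     # therapy stopped after long-term use
    years_used: int


def build_prompts(ctx: PatientContext) -> list[str]:
    """Return the three prompts in the order they are asked."""
    escalation = (
        "You are a gastroenterologist. "
        f"This is your patient: {ctx.details}. "
        "What escalation steps would you take?"
    )
    timeline = (
        "Create a timeline of suggested next steps, with expected duration "
        "for each potential therapy. Keep in mind the patient previously "
        f"discontinued {ctx.discontinued_therapy} due to {ctx.side_effect}, "
        f"and stopped {ctx.long_term_therapy} after {ctx.years_used} years "
        "of use. Provide instructions for the patient including diet, "
        "exercise, and other relevant recommendations. How do you choose "
        "between biologic therapies? What background can you give the "
        "patient, and what choices can you offer?"
    )
    instructions = "Based on the discussion above, create patient instructions."
    return [escalation, timeline, instructions]


if __name__ == "__main__":
    # Placeholder values only; replace them with your own history.
    example = PatientContext(
        details="[current problems, current therapies, past therapies]",
        discontinued_therapy="[therapy]",
        side_effect="[side effect]",
        long_term_therapy="[therapy name]",
        years_used=8,
    )
    for number, prompt in enumerate(build_prompts(example), start=1):
        print(f"Prompt {number}:\n{prompt}\n")
```

Keeping the prompts in one place makes it easier to reuse the same sequence before a later appointment and to see exactly which personal details were shared.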

This 15-minute AI “consultation” gave me a solid understanding of where treatments currently stand. I also did some fact checking with Evidence Hunt - a reliable source of medical information meant for clinicians.

Regardless of this research, I kept in mind my chest pain experience, reminding myself that I’m not a doctor and shouldn’t feel too confident about the results I got. But I felt prepared for the doctor’s appointment, ready to ask follow-up questions, and fact check my research with the specialist.

So back to the doctor’s office and his question: which medications did I want?

I cautiously suggested the therapy I liked most based on my AI research. The doctor briefly walked me through other options, but confirmed that my suggestion was worth giving a try.

I left the appointment feeling hopeful about the new therapy, proud about my research that seemed to have been solid this time around, but also wondering: Is this what medical appointments are now? Do patients show up with pre-conceived ideas based on AI research? Are doctors expecting us to do that level of prep in advance?

Patients Need Project Management and Digital Skills

Years ago, Graham Prestwich, Patient and Public Engagement Lead at Health Innovation Yorkshire & Humber, wrote a worthwhile read about “patients being project managers.”

This means that if you’re a good project manager, your chances of good outcomes are far better than if you’re not. If we expect patients to be a more important player in improved outcomes, we also need to equip them with the right tools - like project management skills, and in the age of digital health, strong digital and AI literacy.

I worry that patient outcomes increasingly depend on how engaged, informed, and assertive people are in advocating for themselves—right down to reminding clinicians about tests or procedures that may have been overlooked.

I am eternally grateful for the clinicians who take care of me, but I also see how increasingly overburdened the healthcare system is. I’m glad I’m still communicating with a human GP, not an automated system. Here’s why.

Concern 3: Patients falling through the cracks due to over-automation, with no human to intervene

Already back in 2021, WIRED reported the story of a woman who was denied pain medication for severe endometriosis-related chronic pain. She later discovered that an algorithm, which was part of a system designed to combat opioid abuse, had flagged her as a “drug shopper”: someone trying to obtain multiple prescriptions for controlled substances from different sources.

However, the reason for the apparent overuse wasn’t what the algorithm assumed. Her dogs had been prescribed opioids, benzodiazepines, and even barbiturates by their veterinarians. And prescriptions for animals are typically issued under the owner’s name.

The algorithm didn’t know that. It only saw multiple prescriptions in her name—and nobody intervened to double-check.

These examples point to a growing tension: as AI tools become more integrated into healthcare, we need to make sure they’re supporting—not replacing—human judgment, empathy, and care. Empowerment should never become a burden. And automation should never mean abandonment.

The Ethical Perspective on AI in healthcare

Dr Jessica Morley is Associate Research Scientist at Yale University Digital Ethics Center. At HIMSS25 Europe she had a short lecture titled: When Data Turns Dangerous: The Risks of Bias and Misuse in Healthcare AI. Two of her concepts stuck with me:

AI is not enabling personalized medicine. Instead, what’s actually happening resembles targeted advertising, where people are clustered into groups based on their characteristics.

Bias is not just a data imbalance, and can’t be solved only by addressing the diversity of datasets. Even with more data, systemic issues like racism, mistrust, and underdiagnosis will still show up in how data is recorded and interpreted.

I asked Jessica for a longer discussion to unpack these issues.

1. Why does AI resemble targeted advertising?

Instead of treating people as individuals, AI systems often cluster patients into demographic or behavioral categories, much as advertisers target consumers based on attributes rather than personhood. AI lacks semantic comprehension and cannot understand complex, contextual expressions like “I don’t feel like myself” or “I’m in pain”, which makes it poorly suited to genuine personalization.

The open question here is: We know AI isn’t perfect. If we accept the thesis that it resembles targeted advertising and clusters people based on available data, is this bad? Is it making care worse?

Dr. Morley believes it might. People with fewer digital records (e.g., marginalized populations) are poorly represented in the datasets used to train AI systems. As a result, AI doesn’t “treat” them; it treats a partial data shadow that may not represent them accurately at all. The clinical problem, therefore, is that over-reliance on this model could lead to misdiagnosis, underdiagnosis, or inappropriate care.

If you want to read about concrete examples of AI causing harm, head to STAT News. In the last few years they have extensively covered how the largest US health insurance company pressured its medical staff to cut off payments for seriously ill patients in lockstep with a computer algorithm’s calculations, denying rehabilitation care for older and disabled Americans, and how a study found that Epic’s artificial intelligence tool to predict sepsis, a deadly complication of infection, was prone to missing cases and flooding clinicians with false alarms. Among the latest research, Declan Grabb, a forensic psychiatry fellow at Stanford, and Max Lamparth, a postdoctoral fellow at the Stanford Center for AI Safety and the Center for International Security and Cooperation, studied the use of chatbots for mental health and found that all but one of the language models were unable to reliably detect and respond to users in mental health emergencies.

2. Let’s talk about bias

It’s easy to think that by getting more diverse data sets, AI accuracy problems will be solved. Here, Dr. Morley warns of two things we don’t often think about. First, in healthcare, “bias” isn’t always a bad word: accounting for biological differences across sexes or ethnicities may be essential for effective treatment. The goal is transparent, justifiable bias, not neutrality. Second, focusing solely on creating a “perfect” data representation of everyone risks surveillance creep, meaning more data collection and more invasive monitoring, all in the name of fairness.

Bias should be seen as a diagnostic tool for identifying where systems fail patients, and be managed with warning labels. Which brings us to regulation.

Current ideas for global regulation for AI in healthcare

Up until recently, the Coalition for Health AI (CHAI) seemed to be leading the way in regulating AI through a network of quality assurance labs that would evaluate AI models in healthcare. CHAI wanted AI innovators to provide a kind of AI food label that would include information about the AI model, its data sources and more. However, at the end of May 2025, CHAI pivoted from assurance labs to “training assurance resource providers”, as reported by STAT News. In essence, this means that instead of governing assurance labs that provide credibility and accountability for assessing AI solutions, CHAI shifted towards empowering organisations to assess solutions themselves.

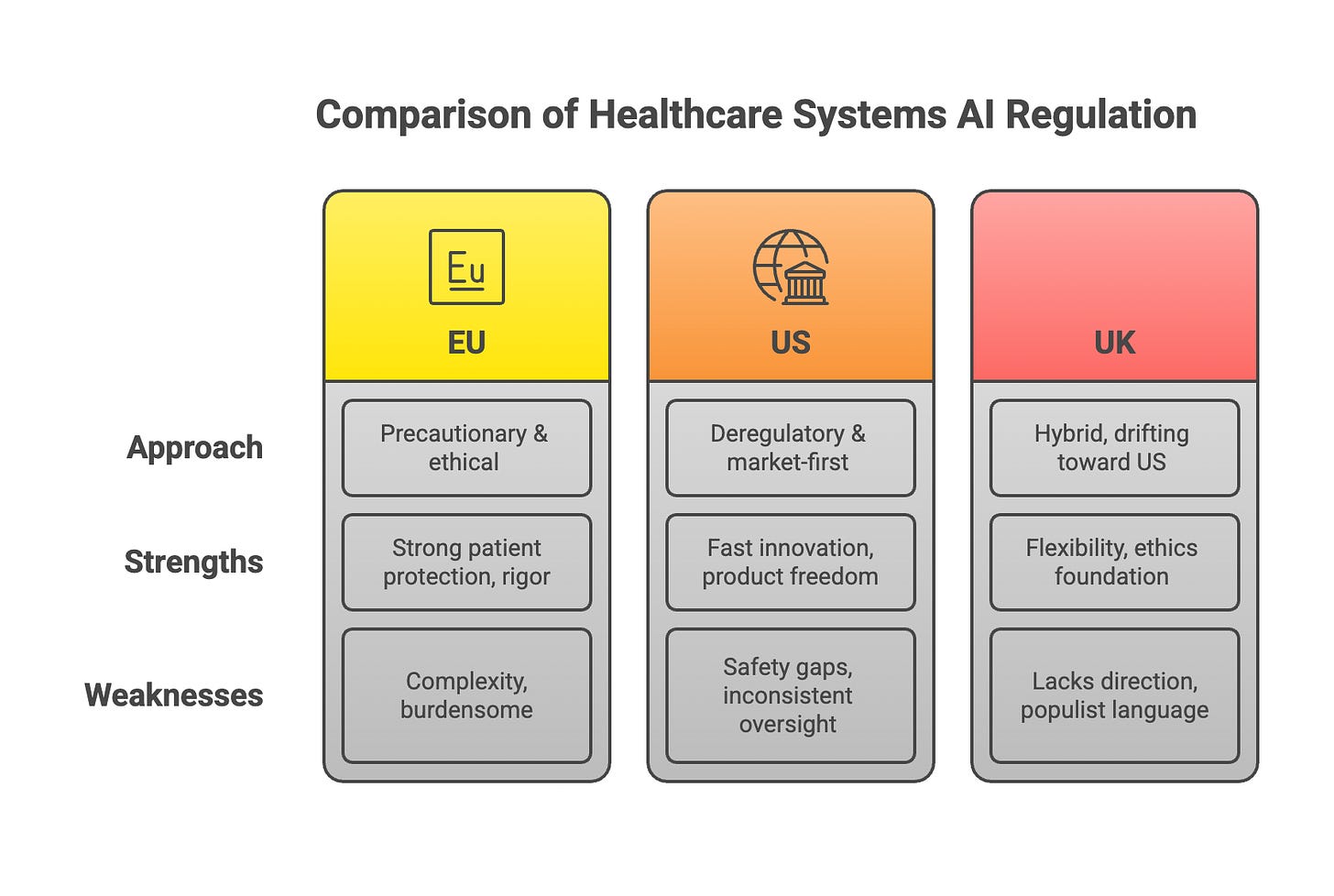

In the US a lot has changed with the current administration, with the focus on speed of innovation and deregulation of AI, not just in healthcare. The FDA is becoming both a power‑user of generative AI and a lighter‑touch regulator of external AI products. This is how some regulations compare based on Dr. Morley’s research:

Can we build a global regulatory network with an early warning system?

Beyond the US, HealthAI, a global non-profit that champions responsible AI in Health, is trying to build a global collaborative system for AI regulation. The agency works with governments to help them set up appropriate governance structures to enable the adoption of AI for good.

“One question, I ask myself many times is how ethical is it that a technology that can improve outcomes is not being put in the market because we haven't been capable to put in the right governance in place, and that's happening today,” says HealthAI CEO Ricardo Baptista Leite, a Portuguese medical doctor, manager, author, university professor, analyst and politician. The key challenge in his eyes is the financing equation. If the payers, meaning governments and insurance companies, are not going to chip in with payment models, scalability will be an issue.

HealthAI has several ideas for managing AI globally, with heavy reliance on governments. Rather than relying on external actors, Ricardo Baptista Leite advocates for investment in sovereign capacity, which includes building local regulatory and technical expertise to assess, validate, and monitor AI tools. He also emphasizes the importance of collaborative governance, encouraging countries to participate in global initiatives like HealthAI’s regulatory network and community of practice, where shared knowledge and real-time incident data can strengthen oversight.

To make adoption viable, governments must learn to evaluate AI’s clinical and economic impact and establish evidence-based reimbursement pathways. Crucially, Ricardo insists that regulation must be adapted to each country's unique context—avoiding rigid, imported frameworks that ignore cultural and structural realities.

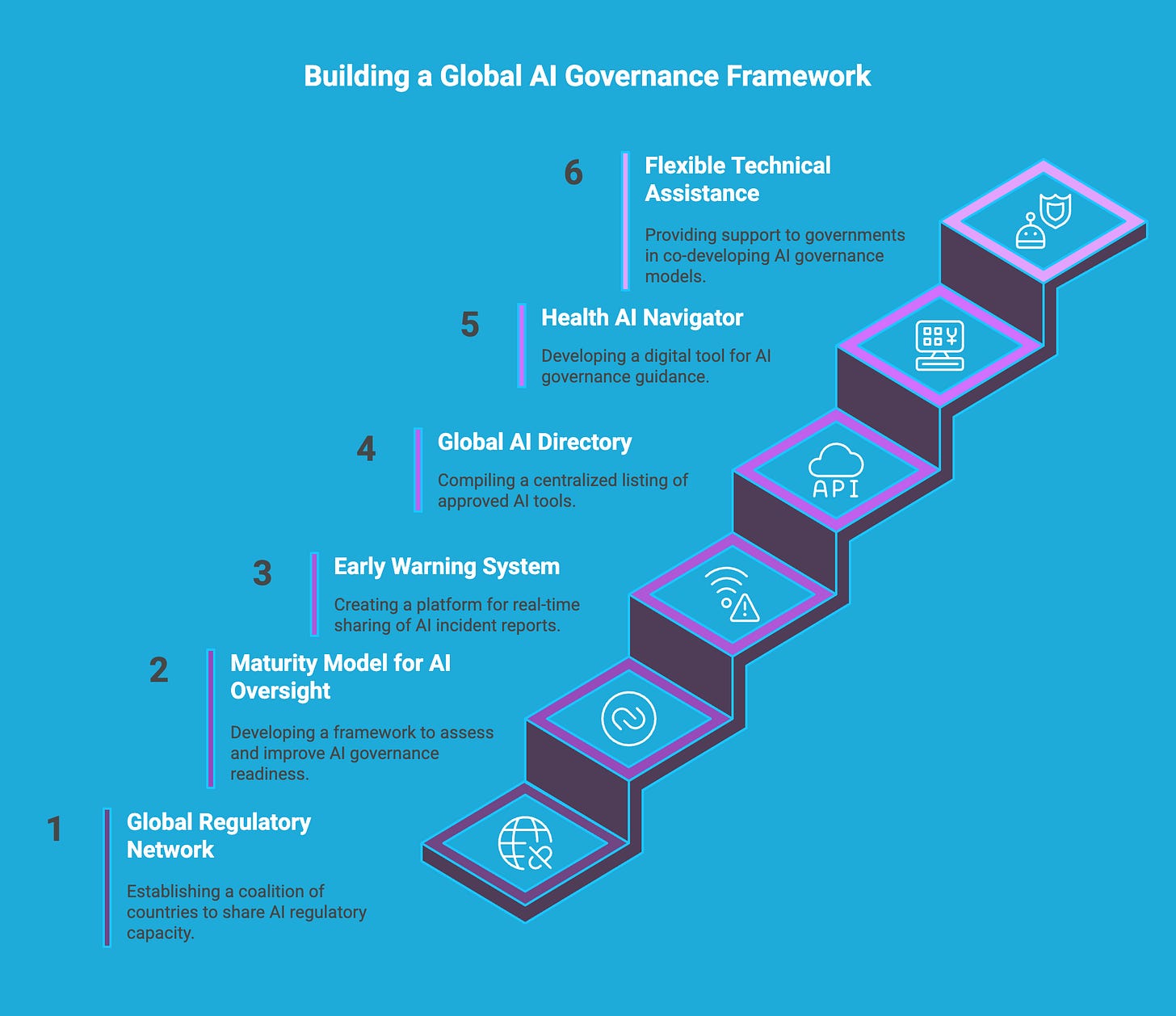

HealthAI’s Vision for Governance

HealthAI envisions a globally coordinated but locally adaptable model of AI governance in healthcare, centered on trust, transparency, and scalability.

Key elements include:

Global Regulatory Network: A coalition of 10 "pioneer countries" (to expand further) working together to build and share AI regulatory capacity, tools, and best practices.

Maturity Model for AI Oversight: A framework—based on WHO models—to help countries assess and improve their readiness to govern AI, from incident tracking to market approval.

Early Warning System: A platform to share post-market incident reports across countries in real time, improving safety and transparency across borders.

Global AI Directory: A centralized listing of AI tools approved by country regulators, helping governments, innovators, and payers understand what technologies are validated and where.

Health AI Navigator (coming soon): A digital tool tailored to different audiences (innovators, regulators, policymakers) offering implementation-oriented guidance on AI governance, reimbursement, and data management.

Flexible Technical Assistance: HealthAI works as a partner, not a policymaker, helping governments co-develop governance models without imposing rigid structures.

“One thing that in a way breaks my heart sometimes is seeing some countries saying to us, listen, we're not ready yet to address this challenge. Those are the countries that need to step up first, because if they're too far behind, the longer they wait, the harder it is to keep up and the technology is moving in the speed that while it can be either the greatest equalizer of our time, elevating people out of poverty towards better health, or it can be the greatest divider leaving an important part of the population behind,” Ricardo concludes.

So how harmful is AI in healthcare?

My current patient journey has reminded me that while we often speak about patient-centricity and interdisciplinary care as the north stars in healthcare, we’re still far from seeing those principles fully realized in practice.

I remember a doctor colleague from my time in healthcare IT once saying, “Doctors are trained to make fast decisions.” But as healthcare systems become increasingly strained, and clinicians are pushed to move even faster, more responsibility, more project management, more cognitive load is shifting onto patients. Especially if you want the best care.

AI is often presented as the solution to these pressures. In fact, Anthony Chang, a leading authority in the field, argues that it will soon be unethical not to use AI in healthcare. In his view, the biggest challenge with AI in healthcare is that “mature AI tools aren't being used efficiently or effectively; tools that could reduce caregiver burden and improve outcomes.”

Personally, I see tremendous potential in AI, particularly in research and system-level optimization. But if we want AI to be an equalizer rather than a divider, as Ricardo Baptista Leite rightly cautions, we have serious work ahead.

We need to design systems that don’t just assume every patient is digitally literate, medically informed, and self-confident enough to challenge their clinician. We need AI that supports, not replaces, human connection. Otherwise, we risk creating a healthcare future that’s efficient on the surface but deeply inequitable underneath.

Where do you stand on the topic? Leave a comment and share your perspective.

This is a really important and well-written piece. It’s refreshing to see AI in healthcare discussed beyond the usual hype, especially around issues like bias, transparency, and unintended harm. Your points highlight why ethical design and accountability are just as critical as innovation. I’d be interested to hear how you see explainability and patient trust evolving as these tools become more embedded in clinical decision-making.

Great edition ... not sure I would have gone with the title "harmful" as it conveys author bias, IMO. However, I know and appreciate the ethos of getting to the truth. Thanks for sharing.